Imovirtual

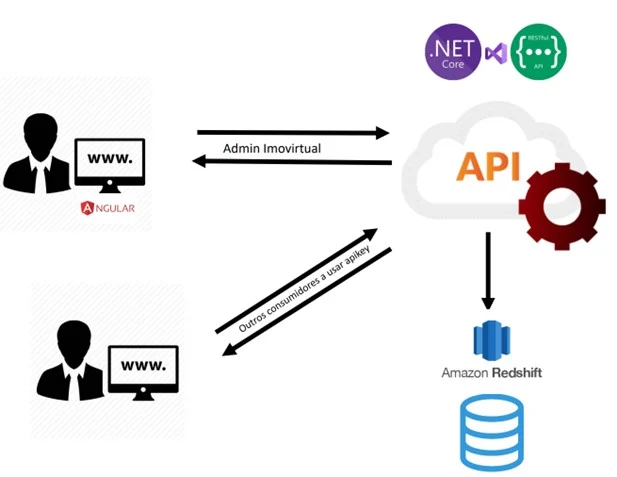

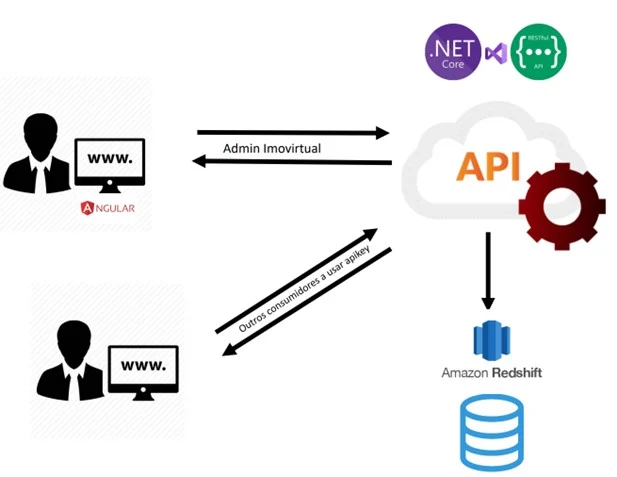

From an architectural point of view, the main challenge was to build a structure capable of handling very large daily volumes of data while maintaining its integrity and resilience. In the event of a disaster, there is only a short window, measured in hours, to recover this data.

The solution used Microsoft Azure Data Factory, configured to ingest the raw data and then transform it in two ways:

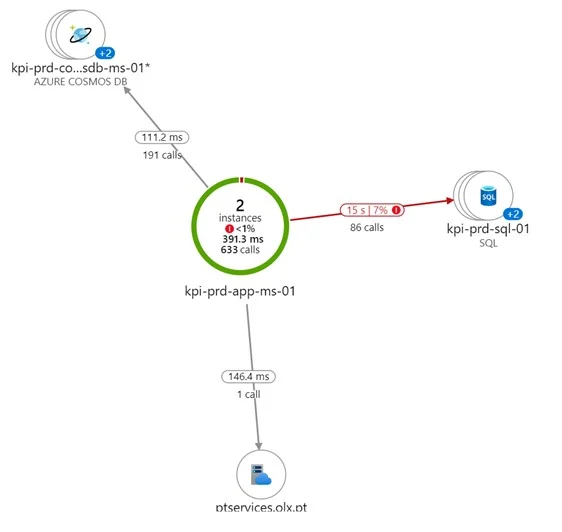

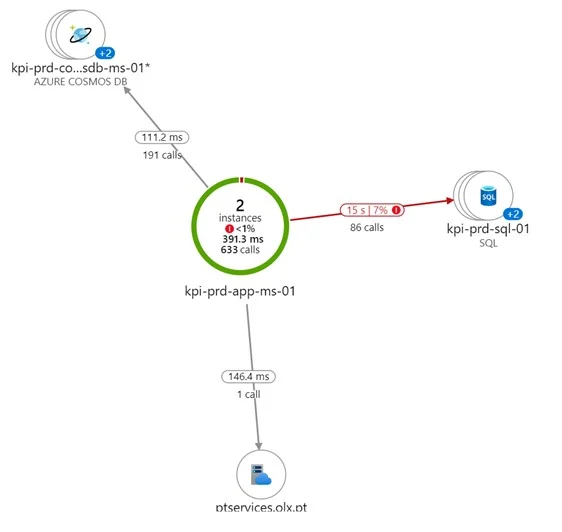

However, that was only part of the challenge. To meet expectations with such a large volume of data, the application also had to respond quickly once it was made available to the client. This speed was achieved with techniques such as in-memory caching.

The cache policy is designed so that, after a user first accesses the platform, most of their data is held in memory. Subsequent accesses then do not need to query the database, which makes responses highly efficient.
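The caching behaviour described above matches a read-through pattern: the first request for a key goes to the database, and later requests are answered from memory. The following is a minimal Python sketch under stated assumptions. The class name `ReadThroughCache`, the loader `load_agent_stats` and the time-to-live (TTL) value are all hypothetical, since the source does not describe how the real cache is keyed or invalidated.

```python
import time
from typing import Any, Callable, Dict, Tuple


class ReadThroughCache:
    """In-memory read-through cache (illustrative sketch).

    The first request for a key loads it from the backing store; later
    requests are served from memory until the entry expires. The TTL is a
    hypothetical parameter, not taken from the original project."""

    def __init__(
        self,
        loader: Callable[[str], Any],
        ttl_seconds: float = 600.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._loader = loader      # e.g. a function that queries the database
        self._ttl = ttl_seconds
        self._clock = clock        # injectable so expiry can be tested
        self._entries: Dict[str, Tuple[float, Any]] = {}
        self.loads = 0             # how many times the backing store was queried

    def get(self, key: str) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now < entry[0]:
            return entry[1]        # cache hit: no database access
        value = self._loader(key)  # cache miss or expired entry
        self.loads += 1
        self._entries[key] = (now + self._ttl, value)
        return value


def load_agent_stats(agent_id: str) -> Dict[str, Any]:
    # Hypothetical stand-in for the real database query.
    return {"agent": agent_id, "listings": 3}


cache = ReadThroughCache(load_agent_stats)
cache.get("agent-1")  # first access: loaded from the "database"
cache.get("agent-1")  # subsequent access: served from memory
print(cache.loads)    # 1
```

This sketch is single-process, not thread-safe and has no size limit; a production cache would typically add locking and an eviction policy on top of the same idea.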

From a security point of view, Imovirtual has strict access and validation rules. Before the project could go into production, penetration tests were performed and the solution was adapted to Imovirtual's security policies, using security vaults to store secrets, passwords and database connection strings.
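Storing connection strings in a vault means application code asks for them by name at runtime instead of hard-coding them. The sketch below is a hypothetical Python illustration: `SecretStore`, `InMemorySecretStore`, `database_connection_string` and the secret name `db-connection-string` are invented for the example, and the in-memory store is only a test double. The source does not name the vault product; if it were Azure Key Vault, a real adapter could wrap `SecretClient.get_secret(name).value` from the `azure-keyvault-secrets` package, but that is an assumption.

```python
from typing import Dict, Protocol


class SecretStore(Protocol):
    """Anything that can return a secret by name (hypothetical interface)."""

    def get_secret_value(self, name: str) -> str: ...


class InMemorySecretStore:
    """Test double standing in for a real vault client."""

    def __init__(self, secrets: Dict[str, str]) -> None:
        self._secrets = dict(secrets)

    def get_secret_value(self, name: str) -> str:
        if name not in self._secrets:
            raise LookupError(f"secret {name!r} not found in vault")
        return self._secrets[name]


def database_connection_string(
    store: SecretStore, secret_name: str = "db-connection-string"
) -> str:
    # The connection string is resolved at runtime from the vault, so it
    # never appears in source code, config files or build artifacts.
    return store.get_secret_value(secret_name)


vault = InMemorySecretStore({"db-connection-string": "Server=example;Database=stats"})
conn_str = database_connection_string(vault)
```

Depending only on the small `SecretStore` interface keeps the application code the same whether it runs against a test double or a real vault.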

Note: the daily imports are large relative to the total amount of data stored, but many tables are truncated and recreated every day. For this reason, the total amount of data stored does not grow in proportion to the daily imports.
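The note above explains why stored volume stays flat: a table that is emptied and reloaded each day only ever holds the latest import. Here is a minimal sketch of that truncate-and-reload (full refresh) pattern using Python's built-in `sqlite3`. The table `listing_stats` and its rows are hypothetical, and the source does not say how the real Azure Data Factory pipelines perform the reload.

```python
import sqlite3
from typing import Iterable, Tuple


def full_refresh(conn: sqlite3.Connection, rows: Iterable[Tuple[str, int]]) -> None:
    """Replace the whole table with today's import (truncate-and-reload)."""
    with conn:  # commits on success, rolls back on error
        # SQLite has no TRUNCATE statement; DELETE without WHERE empties the table.
        conn.execute("DELETE FROM listing_stats")
        conn.executemany(
            "INSERT INTO listing_stats (listing_id, views) VALUES (?, ?)", rows
        )


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE listing_stats (listing_id TEXT PRIMARY KEY, views INTEGER)")

day1 = [("L1", 10), ("L2", 5)]
day2 = [("L1", 12), ("L2", 7), ("L3", 1)]
imported = 0
for batch in (day1, day2):
    full_refresh(conn, batch)
    imported += len(batch)

stored = conn.execute("SELECT COUNT(*) FROM listing_stats").fetchone()[0]
print(imported, stored)  # 5 3
```

Five rows were imported over two days, but only the three rows from the latest load are stored, which is the non-proportional growth the note describes.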

The project followed the Scrum methodology.

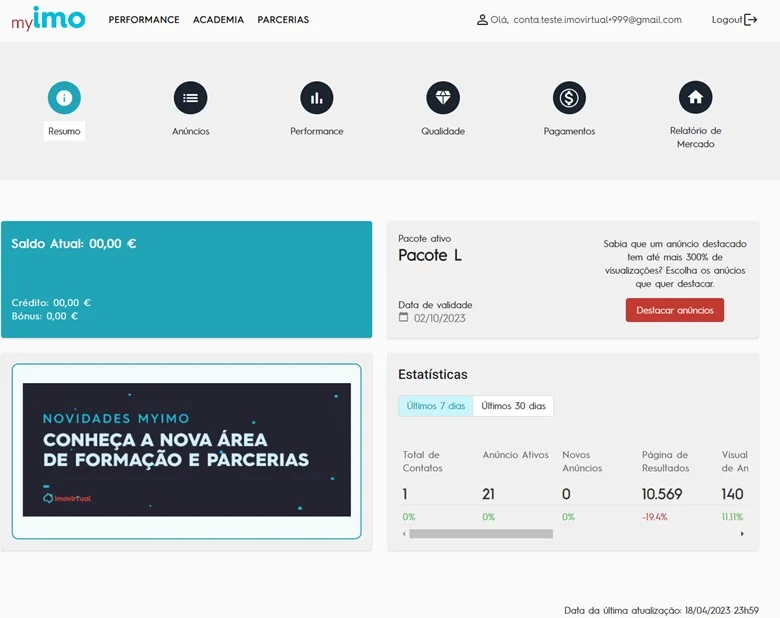

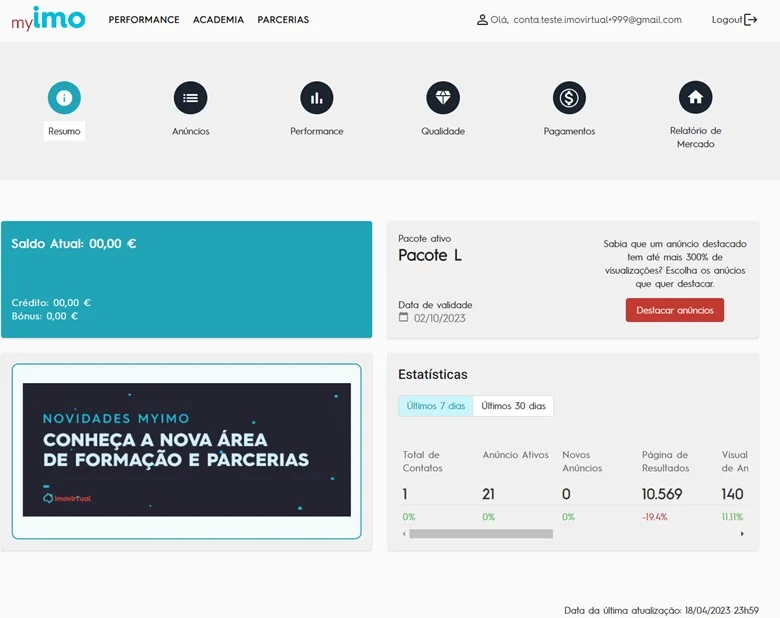

Professional real estate agents now have at their disposal a complete platform where they can see all the statistics of their listings.

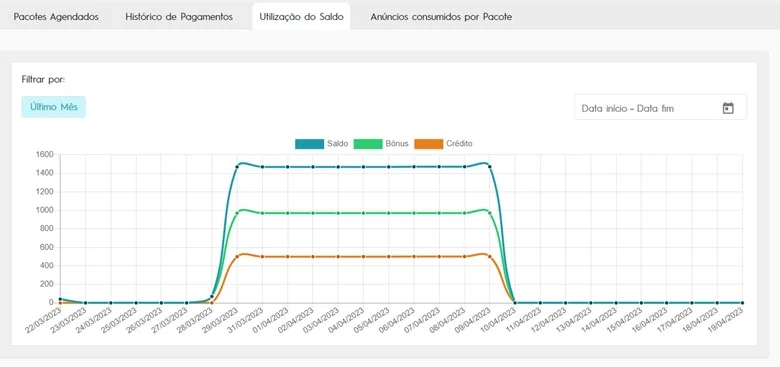

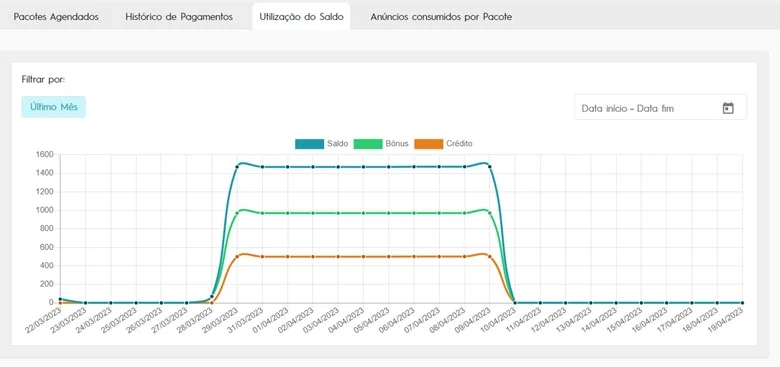

The platform offers detailed analysis based on indicators and charts of historical data, so decisions can be made based on performance. Agents can also view their history of payments and investments, as well as official real estate market reports by region.

A 3-month project. The team included:

Homepage:

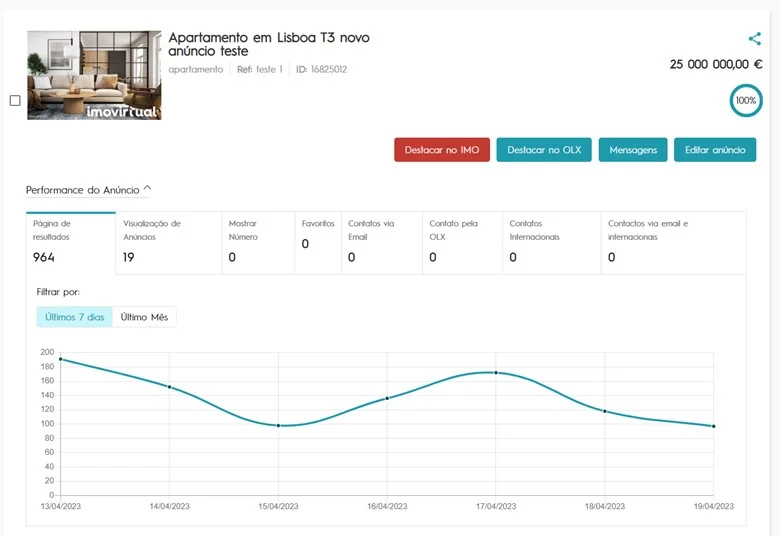

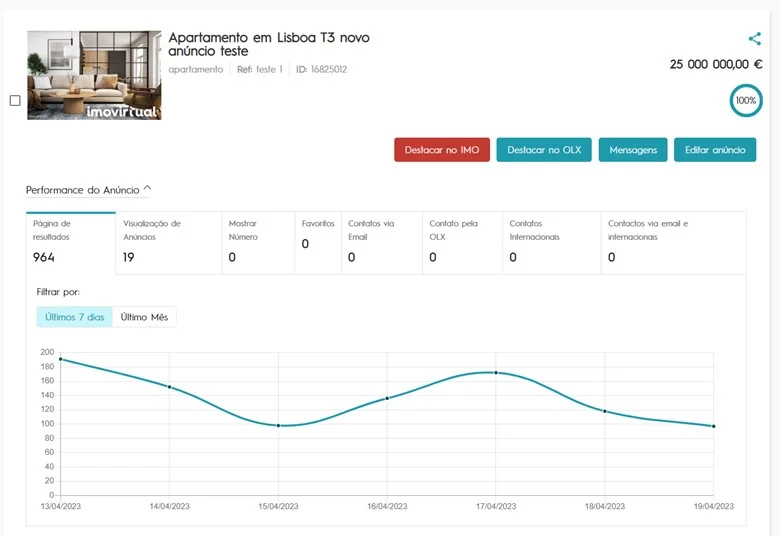

Ads performance:

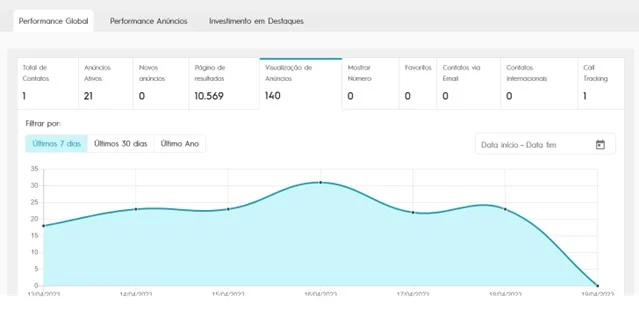

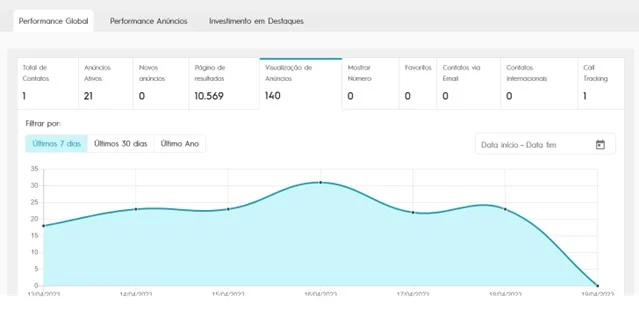

Overall performance:

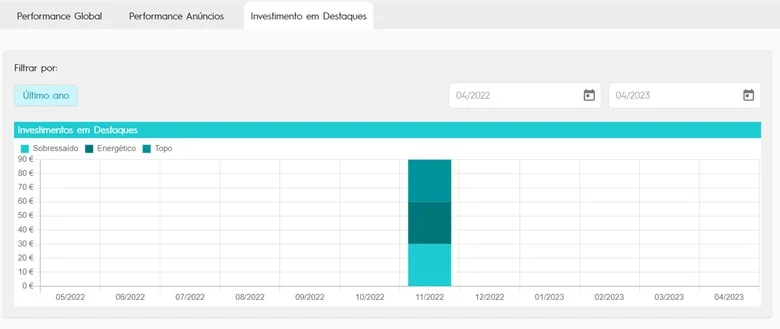

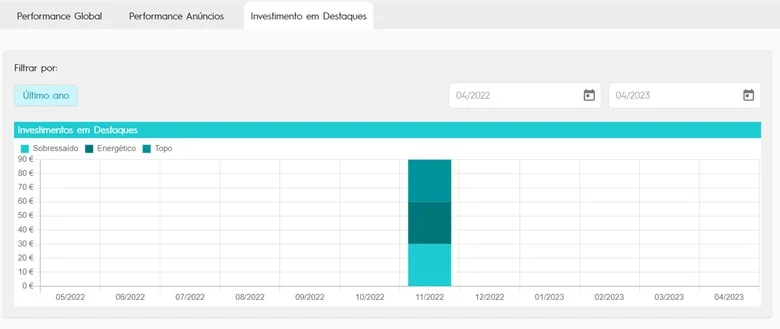

Featured investments:

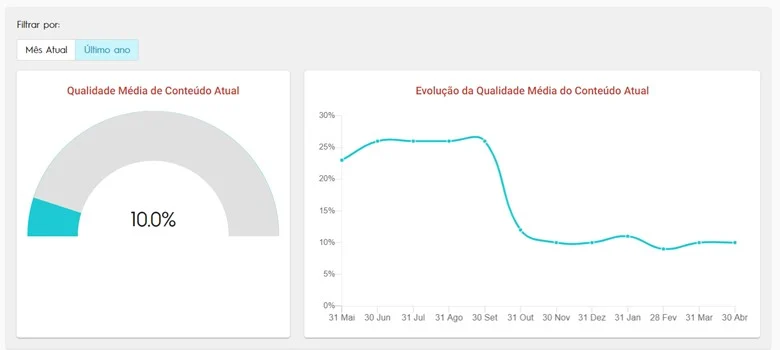

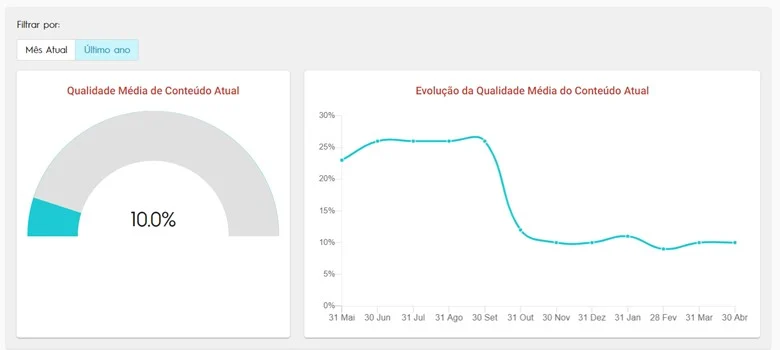

Overall quality of the ads:

Payments:
